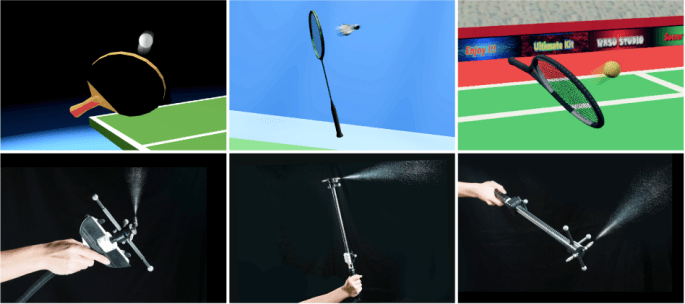

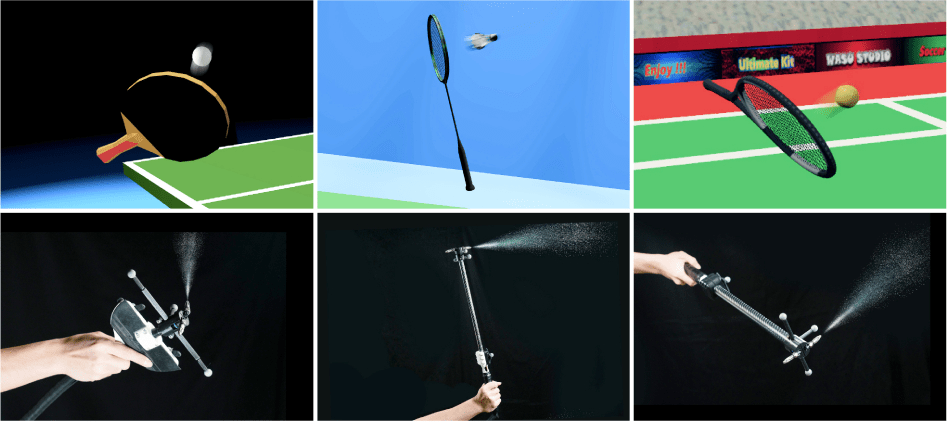

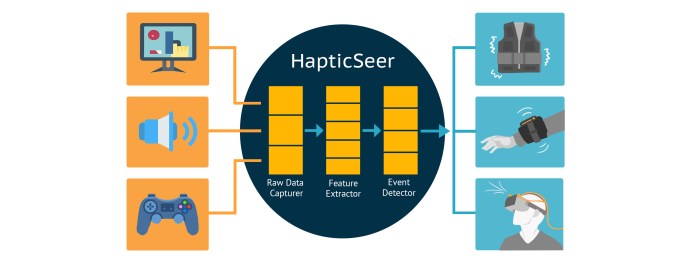

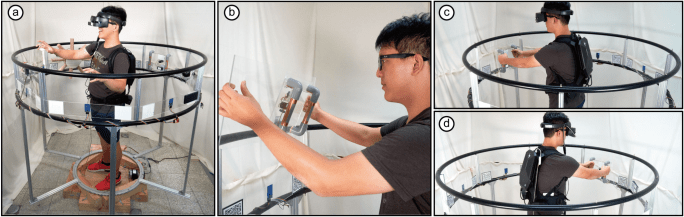

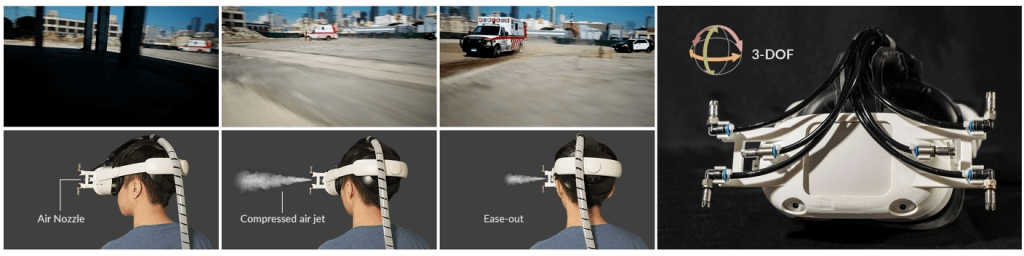

First-person view (FPV) drones are a recently developed category of drones designed for precision flying and for capturing exhilarating experiences that were not possible before, such as navigating through tight indoor spaces and flying extremely close to subjects of interest. FPV viewing experiences, while exhilarating, typically involve frequent camera rotations that can lead to visually induced discomfort. We present TurnAhead, which uses 3-DoF rotational haptic cues that correspond to camera rotations to improve the comfort, immersion, and enjoyment of FPV experiences. It uses headset-mounted air jets to provide ungrounded rotational forces and is the first device to support rotation around all 3 axes: yaw, pitch, and roll. We conducted a series of perception and formative studies to explore the design space of the timing and intensity of haptic cues, followed by a user experience evaluation, with a combined total of 44 participants (n=12, 8, 6, 18). Results showed that TurnAhead significantly improved overall comfort, immersion, and enjoyment, and was preferred by 89% of participants.

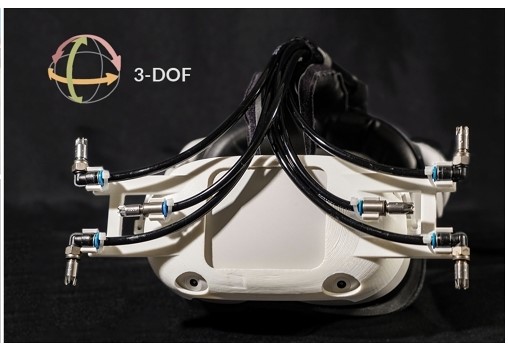

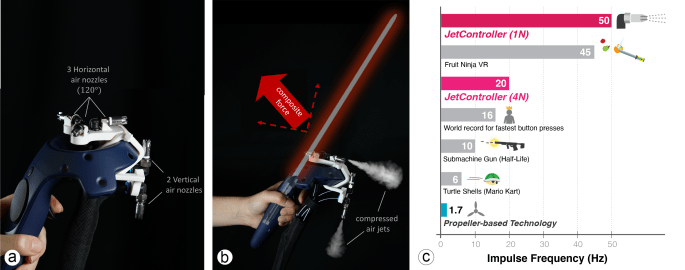

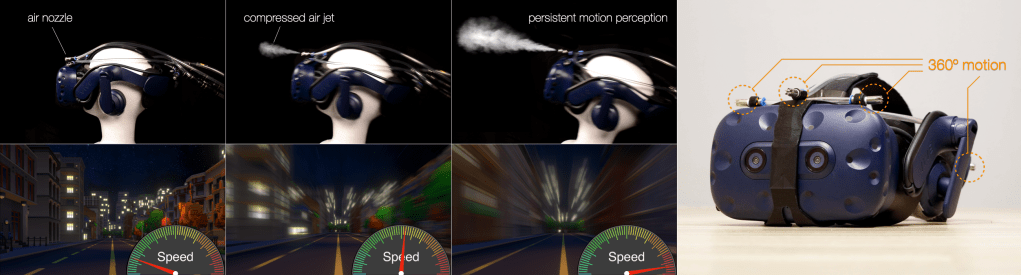

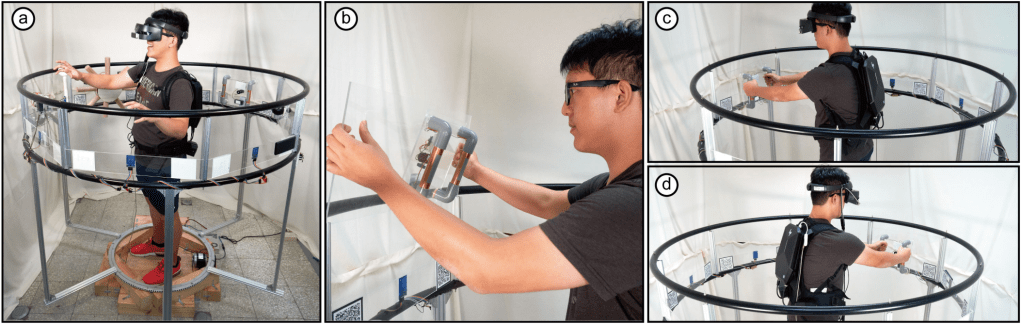

(a) TurnAhead explores the design space of applying 3-DoF rotational haptic cues to the head to improve the comfort, immersion, and enjoyment of first-person viewing (FPV) experiences. In this example, a user is viewing a car chase scene from the movie Ambulance (2022), shot by FPV drones, as the camera rotates to the right. (b) Our device consists of 6 air nozzles mounted on the front of a VR headset and placed tangent to the head. It is the first wearable device capable of generating rotational forces to turn left and right (yaw axis), which accounts for 84% of the rotations in FPV footage.

Links

[CHI’23 Full Paper] Honorable Mention Award🏆 TurnAhead: Designing 3-DoF Rotational Haptic Cues to Improve First-person Viewing (FPV) Experiences

Bo-Cheng Ke, Min-Han Li, Yu Chen, Chia-Yu Cheng, Chiao-Ju Chang, Yun-Fang Li, Shun-Yu Wang, Chiao Fang, Mike Y. Chen. 2023. CHI ’23: Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, New York, NY, USA. Article No.: 401, Pages 1 – 15.