Deaf and hard-of-hearing (DHH) individuals encounter difficulties when engaged in group conversations with hearing individuals, due to factors such as simultaneous utterances from multiple speakers and speakers who may be out of view.

We interviewed and co-designed with eight DHH participants to address the following challenges: 1) associating utterances with speakers, 2) ordering utterances from different speakers, 3) displaying optimal content length, and 4) visualizing utterances from out-of-view speakers.

We evaluated multiple designs for each of the four challenges through a user study with twelve DHH participants.

Our study results showed that participants significantly preferred speech bubble visualizations over traditional captions.

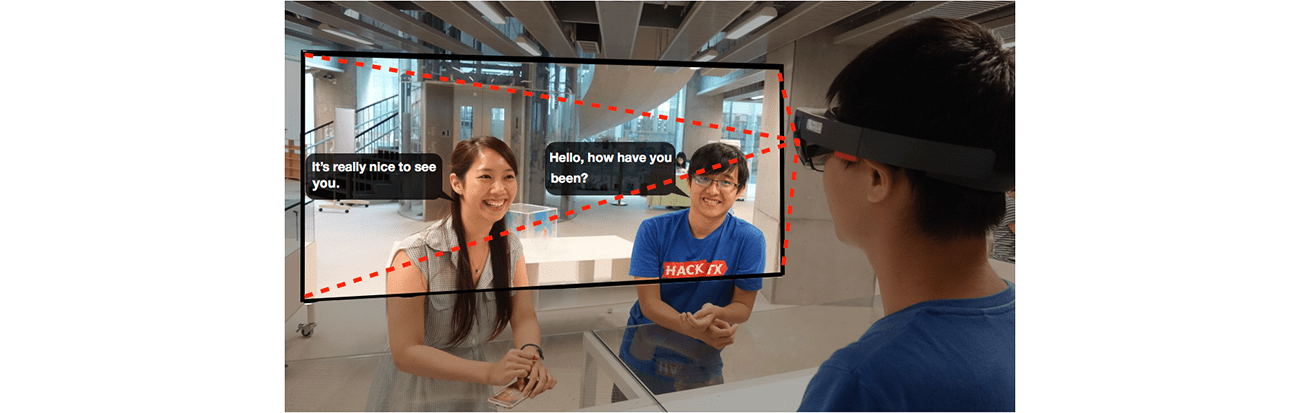

These design preferences guided our development of SpeechBubbles, a real-time speech recognition interface prototype on an augmented reality head-mounted display.

From our evaluations, we further demonstrated that DHH participants preferred our prototype over traditional captions for group conversations.

Yi-Hao Peng, Ming-Wei Hsi, Paul Taele, Ting-Yu Lin, Po-En Lai, Leon Hsu, Tzu-chuan Chen, Te-Yen Wu, Yu-An Chen, Hsien-Hui Tang, and Mike Y. Chen. 2018. SpeechBubbles: Enhancing Captioning Experiences for Deaf and Hard-of-Hearing People in Group Conversations. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (CHI ’18). Association for Computing Machinery, New York, NY, USA, Paper 293, 1–10.

DOI: https://doi.org/10.1145/3173574.3173867