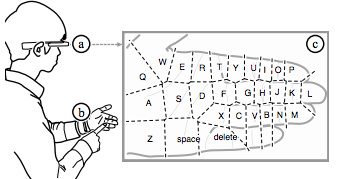

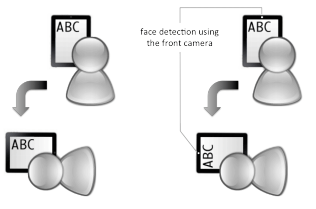

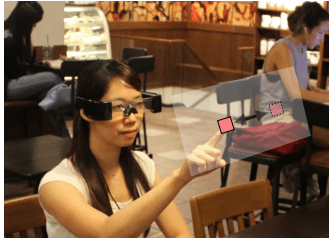

Smart glasses, such as Google Glass, provide always-available displays not offered by console and mobile gaming devices, and could potentially offer a pervasive gaming experience. However, research on input for games on smart glasses has so far been constrained by the available sensors. To help inform design directions, this paper explores user-defined game input for smart glasses beyond the capabilities of current sensors, focusing on interaction in public settings. We conducted a user-defined input study with 24 participants, each performing 17 common game control tasks using 3 classes of interaction and 2 form factors of smart glasses, for a total of 2448 trials. Results show that users significantly preferred non-touch and non-handheld interaction, such as in-air gestures, over using handheld input devices. Also, for touch input without handheld devices, users preferred interacting with their palms over wearable devices (51% vs 20%). In addition, users preferred interactions that are less noticeable due to concerns about social acceptance, and preferred in-air gestures in front of the torso rather than in front of the face (63% vs 37%).

User-Defined Game Input for Smart Glasses in Public Space

Ying-Chao Tung, Chun-Yen Hsu, Han-Yu Wang, Silvia Chyou, Jhe-Wei Lin, Pei-Jung Wu, Andries Valstar, and Mike Y. Chen. 2015. User-Defined Game Input for Smart Glasses in Public Space. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems (CHI ’15). Association for Computing Machinery, New York, NY, USA, 3327–3336.

DOI: https://doi.org/10.1145/2702123.2702214